Macrosoft seeks to achieve high levels of automation in finding and fixing coding errors throughout the life cycle of a development project. We pursue 3 objectives across a diverse range of system applications and languages, and for new and emerging application architectures, by implementing internal coding and unit test standards and toolsets.

Macrosoft is committed to providing our clients with code and systems that are:

- Reliable: High Quality Bug-Free Coding Standard

- Secure: Thoroughly Tested to be free of Security Vulnerabilities

- Maintainable: Catching and fixing code smells, and making modular and portable code that make it easy for our clients to maintain and upgrade the code we build for them

We seek to utilize a powerful toolset of purpose-built analysis engines to detect bugs, security vulnerabilities and code smells. We seek tools that find as many errors as possible and provide our developers with ways to fix the errors quickly and efficiently. We are now using a number of tools in parallel to accomplish the best possible result. We have trained experts in every tool we use to assist our individual developers.

We are constantly evaluating new standards and enhanced tools that provide new or better error coverage and/or better remediation guidance. Our goals are to continually improve our coding processes, reduce coding errors, and use ever more automated tools to speed up our coding processes and achieve best-in-class coding standards as new programming languages, frameworks and tools emerge.

This paper describes the automation tools and processes that we use to accomplish these 3 objectives. The paper is not intended as a technical compendium of all our coding standards or of all the ways we use our coding analysis tools to improve the quality of our coding.

Delivering buggy software erodes our company’s reputation and our clients’ confidence, so this work is of paramount value and importance to our company.

Introduction

Achieving the above 3 objectives is always a moving target for any large development organization. We are constantly evaluating new and enhanced tools in the marketplace to see if they provide a sufficiently compelling benefit/cost ratio to add them to our toolset. In addition to new tools, there are always new coding methods and standards, and application architectures, and we need to ensure the tools we use will work well in these emerging cases as well.

With over 350 developers in the company, and over 30 distinct development projects underway at any given time, this challenge is a major one for us. While there are many ways to code something and different people often prefer one way over another, the fact of our business is that we need all our programmers to adopt the same tools and processes so that we end up with essentially the same coding standards and best practices across all our new systems and products.

The benefits of spending significant time and effort on continually improving coding standards and error checking are twofold. Automating our coding processes by a further 5% in a year leads to a ~2.5% improvement in our margins, which matters a great deal to Macrosoft’s performance. That 5% per year is our current target for annual automation improvements in our coding processes.

On the other side, improving the quality of the code we deliver to our clients by 5% (as measured by UAT and post-implementation defects found) is valuable to our clients as well, for several reasons, including benefits in system performance, standardization and maintainability, as well as audit, compliance and extensibility of the systems we deliver to them. Most important is the fact that the system has been checked intensively for security vulnerabilities.

So both metrics, automation and quality, are critical to our best practices in coding, both for us and for our clients. We work hard to improve on them continuously. There is almost nothing more important to our company!

In about half of our projects we are subject to the tools and standards set by our clients and of course in those cases we follow exactly what the client desires. For the other half we are either building out a new product or the client lets us set the development processes and standards. In the latter case of course, we follow all the best practices we will discuss in this paper. Even in the former case, upfront in such client-driven projects, we discuss all processes and standards we would like to use with the client and try to mold our work to achieve the best possible outcome from our development team’s work. In almost all projects we end up using the same set of tools and processes for the most part.

As noted earlier, new and improved versions of commercial and open-source products and frameworks with better coding standardization and error checking methods are coming out all the time. In line with these enhanced tools and frameworks, there are new methods and procedures for injecting the outputs of these tools into our development processes. So last year’s best tools will not suffice for this year, and certainly not for next year.

As a result, we have our best developer teams constantly assessing and evaluating the newest versions and the newest tools available in the marketplace. Finding errors earlier and adhering to best-practice coding standards is critical to the success of our company. [1]

We are convinced that no one tool will do the entire job for us, across our diverse set of applications and application architectures. So, we have a growing set of tools that handle different scenarios and different types of coding and provide different warnings and outputs for our developers to work with. What is the ideal combination to run in any particular circumstance? The answer is of course changing rapidly with time as new and better tools are being released and new and better development methods are coming into being. However, the short answer is we don’t know the optimal set of tools to run and in what order. But we do know that it will be more than a single tool for sure.

Yes, running more than one tool is more expensive and time consuming than running a single tool and leaving it at that. But our cost benefit analysis to date indicates strongly that several tools in combination (either in series or in parallel) is much more cost effective, leading to much better automation and performance of the coding process and leading to much higher quality of our delivered code.

We expect to continue using a combination of tools. The different tools have different sweet spots and find different types of errors. There are some overlaps, but by and large a high percentage of errors are found only by an individual tool. Maybe down the road a single tool will do it all, but that is not the case today. One point to keep in mind: we have over 350 developers and growing, so we can afford to dedicate one development manager[2] to each of the tools we use, which tremendously speeds up the results we get from each tool. The bottom line for us is to continually seek out and use the best-in-class set of code analysis and testing tools to identify and fix all possible errors.
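To make the point about overlapping and tool-specific findings concrete, here is a minimal sketch of how results from several tools could be compared. The tool names, the finding key (file, line, category) and the sample data are all assumptions for illustration; real tools report findings in their own formats, and this is not how any of our tools exports results.

```python
from typing import Dict, Set, Tuple

# A finding is identified here by (file, line, rule category). This key is an
# assumption for illustration; real tools use their own report formats.
Finding = Tuple[str, int, str]


def unique_findings(results: Dict[str, Set[Finding]]) -> Dict[str, Set[Finding]]:
    """Return, for each tool, the findings that no other tool reported."""
    unique = {}
    for tool, findings in results.items():
        others: Set[Finding] = set()
        for other_tool, other_findings in results.items():
            if other_tool != tool:
                others |= other_findings
        unique[tool] = findings - others
    return unique


# Hypothetical results from two tools run on the same code base.
results = {
    "tool_a": {("auth.py", 10, "injection"), ("db.py", 42, "resource-leak")},
    "tool_b": {("db.py", 42, "resource-leak"), ("ui.py", 7, "duplication")},
}

only = unique_findings(results)
overlap = results["tool_a"] & results["tool_b"]

print("found by both:", sorted(overlap))
for tool, findings in only.items():
    print(f"found only by {tool}:", sorted(findings))
```

Tracking the size of each tool's unique set over time is one simple way to judge whether a tool still earns its place in the toolset.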

Coding Process

We pick up the coding story at the point our individual coders on a project have completed their module development. [3] The code then goes through one of our development leads for review. This can involve multiple cycles back and forth depending on the coding standards and requirements until the code is satisfactory to the development lead.

It is mainly during this process that we engage our standardization, quality and error-checking automation tools to sift through the code in detail and find any and all errors, warnings, and standardization checks. Each individual coder must respond to each and every problem and issue uncovered by these automation tools.

The development lead will not sign off on the coder’s work until she/he is confident that all issues uncovered by the tools have been rectified properly. This process step constitutes our major line of defense in assuring high coding standards are achieved, and most of the easy and moderately difficult coding errors are eliminated and do not further complicate system testing down the road. This step is also critical to eliminate any security vulnerabilities in the code. Once the code has gone through this iterative process, it is ready to be stitched together with other modules and the testers (working with the developers) can start detailed testing of the system.

One other step in the coding process that is done in some of our projects but not all is unit testing, done by the individual developers themselves on the modules they have developed, before those modules are delivered for stitching together into the system. Done well, this is a very good step to include, reducing coding errors to a large degree, but it also adds significantly to the cost of the system, so there is a cost-benefit tradeoff to be made. For each project we discuss this tradeoff in detail with the client and determine whether, and to what degree, the client wants to include that step in the development process.

Current Toolset

There are currently 4 tools in our coding analysis toolset. Not all 4 are used in every development project, and two of them are new and we are still getting to know their full benefits. The four tools are Veracode, ReSharper, CodeGrip, and SonarQube. We currently use Veracode and ReSharper extensively in our development projects. We are engaging the other two tools in selected projects to see how they perform. This section describes what we are looking to get out of each of these code quality tools.

Of course, the top priority for our toolset is uncovering any and all security vulnerabilities in the code. The top tool we use for this is Veracode. The second area has to do with improving the quality of the code over a range of quality factors we list below. For this part of the equation we are currently using ReSharper. A third area of code improvement we are looking at is code smells, and for this we are evaluating CodeGrip. A final tool we are currently evaluating for inclusion in our toolset is SonarQube, which overlaps all three of the areas noted above. So far, we are finding it to be strong both in the extensive range of coding problems it identifies and in providing our developers with concrete methods to fix the problems uncovered.

A couple of points are worth noting up front. First, we have development managers who are expert in each of these tools and are available for individual developers to consult in real time to discuss issues and outputs from the tools. But the individual developers are expected to quickly learn the basics of the tools and be able to use each of them on their own. In fact, we expect the developers to begin to follow the coding standards underlying these tools so that their problems and errors become less prevalent over time. Second, we seek tools and solutions that provide actionable remediation guidance, with code samples and interactive training reachable through the developer’s toolset. Now for a brief review of each tool.

Veracode

Veracode is rated as a market leader in enterprise-level static application security testing (SAST). It is our mainstay tool for identifying security vulnerabilities in our custom code.

SAST analyzes apps to identify coding and design conditions that indicate security vulnerabilities or weaknesses. Veracode allows us to customize and fine-tune the tool according to our coding standards, which reduces the number of false positives and uninteresting results. This is critical to preventing our developers from engaging in wild goose chases. Veracode also categorizes vulnerabilities according to severity and likelihood of occurrence, and thus provides a priority order for us to handle them.
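The idea of ordering vulnerabilities by severity and likelihood can be sketched as follows. This is an illustrative ranking only, not Veracode's scoring method or output format; the `Vulnerability` class, the 1 to 5 severity scale, the likelihood values and the sample findings are all assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Vulnerability:
    name: str
    severity: int      # 1 (low) to 5 (very high); hypothetical scale
    likelihood: float  # 0.0 to 1.0; hypothetical estimate of exploitability


def prioritize(findings: List[Vulnerability]) -> List[Vulnerability]:
    """Order findings by severity * likelihood, highest risk first."""
    return sorted(findings, key=lambda v: v.severity * v.likelihood, reverse=True)


# Hypothetical findings for one scan.
findings = [
    Vulnerability("verbose error message", severity=2, likelihood=0.9),
    Vulnerability("SQL injection", severity=5, likelihood=0.8),
    Vulnerability("weak hash", severity=4, likelihood=0.3),
]

for v in prioritize(findings):
    print(f"{v.severity * v.likelihood:.1f}  {v.name}")
```

A simple product is only one possible weighting; a team could just as well sort by severity first and use likelihood as a tie-breaker.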

In addition to our custom code, Veracode can test 3rd party commercial software and API source code, and it can examine container security. It also supports fuzz testing, in which we feed the app unexpected inputs to uncover potential security problems such as app crashes, memory leaks or buffer overflows. Veracode can be integrated into the SDLC, which is critical for DevOps environments.
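For readers unfamiliar with fuzzing, the following is a minimal, generic sketch of the technique: feed a function many random inputs and record the ones that cause unexpected failures. It is not Veracode's fuzzer. The function under test (`share_per_person`), its deliberate bug, and the input alphabet are hypothetical.

```python
import random
import string
from typing import Callable, List, Tuple


def share_per_person(text: str) -> int:
    """Hypothetical function under test: split 100 units among N people."""
    people = int(text)     # raises ValueError on non-numeric input (expected)
    return 100 // people   # bug: ZeroDivisionError when the input parses to 0


def fuzz(target: Callable[[str], object], runs: int, seed: int = 0) -> List[Tuple[str, str]]:
    """Call target with random strings; record inputs that raise unexpected errors."""
    rng = random.Random(seed)  # fixed seed so a failing input can be reproduced
    alphabet = string.digits + string.ascii_letters + " -+."
    crashes = []
    for _ in range(runs):
        length = rng.randint(0, 4)
        text = "".join(rng.choice(alphabet) for _ in range(length))
        try:
            target(text)
        except ValueError:
            pass  # rejecting malformed input is the intended behavior
        except Exception as exc:
            crashes.append((text, type(exc).__name__))
    return crashes


crashes = fuzz(share_per_person, runs=5000)
print(f"{len(crashes)} crashing inputs found")
```

Each recorded input is a reproducible test case that a developer can turn into a regression test once the underlying bug is fixed.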

We also use Veracode for eLearning to build developers’ confidence in application security by providing the knowledge and skills they need to find and fix flaws earlier, lowering the cost of expensive security flaw remediation.

ReSharper

ReSharper helps us improve our coding productivity by providing a robust set of features for automatic error-checking and code correction that cut development times and increase our coding efficiency. The table below shows some of ReSharper’s main features:

| Category | Description |
| --- | --- |
| Code quality analysis | With its design-time code inspection, ReSharper tells you right away if your code contains errors or can be improved. |
| Fixes to detected code issues | ReSharper provides quick-fixes to eliminate errors and code smells automatically. |
| Project dependency analysis | ReSharper builds project hierarchies and visualizes project dependency diagrams. |
| Type dependency analysis | ReSharper can quickly analyze different kinds of dependencies between types in your solution. |
| Navigation and search | You can jump to any file, type, or member in the codebase in no time. |
| Decompiling third-party code | An integrated decompiler lets you navigate to code in referenced assemblies. |
| Code editing helpers | Multiple code editing helpers including hundreds of instant code transformations. |
| Code generation | You don’t have to write properties, overloads, implementations, and comparers by hand: use code generation actions to handle boilerplate code faster. |
| Safe change of your code base | Solution-wide refactorings to safely change the code base. |
| Compliance to coding standards | Code formatting, naming style assistance, and many other code style preferences. You can get rid of unused code and ensure compliance to coding standards. |
| More features | ReSharper provides a lot more features including extensible templates, a powerful unit test runner, and more. |
| Extensions | ReSharper extensions include full-fledged plug-ins, sets of templates, structural search and replace patterns, and more. |
| Command line tools | You can run code inspection, find code duplicates, or clean up code with standalone command-line tools. |

As a result of ReSharper’s features, our developers spend less time on routine, repetitive manual work and can instead focus on the task at hand. ReSharper quickly pays back its cost in increased developer productivity and improved code quality.

CodeGrip

We are now evaluating CodeGrip for analyzing code smells in our code repositories on GitHub, Bitbucket, and other platforms. It quickly displays the number of code smells it finds, along with the likely technical work required to remove each one. CodeGrip helps our developers and development managers classify and resolve code smells easily, one at a time. It also organizes code smells by severity and time to resolve, so our developers can schedule and fix these issues according to their severity and level of effort.
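As a rough illustration of scheduling by severity and effort, the sketch below picks code smells for a fixed time budget: most severe first, and within the same severity the quickest fixes first. This is our own simplified example, not CodeGrip's algorithm or data model; the `CodeSmell` class, the scales and the backlog entries are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class CodeSmell:
    description: str
    severity: int        # higher means more severe; hypothetical scale
    minutes_to_fix: int  # estimated effort; hypothetical values


def plan_fixes(smells: List[CodeSmell], budget_minutes: int) -> List[CodeSmell]:
    """Pick smells most-severe first, quickest first within a severity,
    skipping any item that no longer fits in the remaining budget."""
    ordered = sorted(smells, key=lambda s: (-s.severity, s.minutes_to_fix))
    planned: List[CodeSmell] = []
    remaining = budget_minutes
    for smell in ordered:
        if smell.minutes_to_fix <= remaining:
            planned.append(smell)
            remaining -= smell.minutes_to_fix
    return planned


# Hypothetical backlog reported for one repository.
backlog = [
    CodeSmell("long method in OrderService", severity=3, minutes_to_fix=90),
    CodeSmell("duplicated validation logic", severity=3, minutes_to_fix=30),
    CodeSmell("unused private field", severity=1, minutes_to_fix=5),
    CodeSmell("deeply nested conditionals", severity=2, minutes_to_fix=60),
]

plan = plan_fixes(backlog, budget_minutes=120)
for smell in plan:
    print(f"severity {smell.severity}, {smell.minutes_to_fix} min: {smell.description}")
```

With a 120-minute budget, the two severity-3 items use the whole budget, so the lower-severity items wait for the next cycle.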

Code smells can be present even in code written by experienced programmers. They are a big problem because they can reduce the lifetime of the software we build and make the code difficult to maintain. Expanding a system’s functionality can also be difficult when smelly code is present.

Code smells often go undetected. That is why we are now trying this automated tool to make them easier to detect. Code smells are not bugs or errors. Instead, they are deficiencies in the code that can slow down processing and increase the risk of failures and errors, while making the program vulnerable to bugs in the future.

Our objective is to find and fix nearly all code smells in the systems we deliver to clients. As our developers and development managers fix the various types of common code smells identified by our automation tool, we expect the rate at which these smells occur in our future code to drop substantially over time.

SonarQube

The fourth tool we are now evaluating to add to our toolset is SonarQube. It provides functionality for all three of the above areas of code quality analysis provided by the prior three tools. We believe this tool is a much more integrated and complete code analysis tool that likely will help us in a variety of ways to achieve our development process automation and quality goals and objectives for 2022. Among the areas it supports are the following:

- Code Quality

  - Release Quality Code

  - Maintainability (code smells)

- Code Security

  - Security Analysis

  - Open Web Application Security Project (OWASP) Top 10 issues

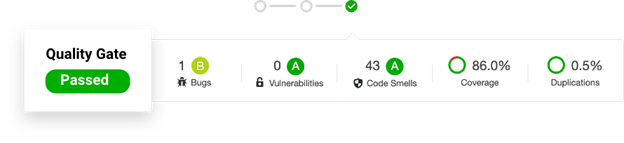

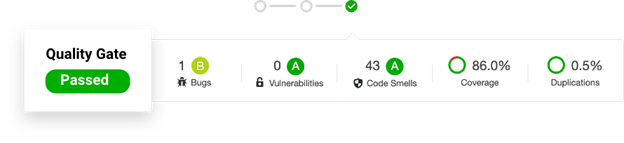

SonarQube provides Quality Gates that tell us at every analysis stage whether our code is ready to release. Right out of the box, SonarQube gives clear signals on whether our code commits are clean, whether projects are releasable, and how well our development organization (for each project) is hitting the mark. If we are not hitting the mark on a project, we know immediately what’s wrong and how to fix it. These are tremendous advantages across our development organization, and we are hoping to have our full evaluation of the tool done by the end of 2021 so we can start the new year with this advanced tool added to our toolset.

Above is a sample of the kinds of summary reports available from SonarQube for a specific project. Similar reports are available that aggregate across all projects. We won’t be surprised anymore at the last minute with unexpected quality problems. SonarQube gives us a clear releasability indicator at every build. It keeps quality front and center throughout the development cycle. These quality gates coalesce our development teams around a shared vision of quality. Everyone knows the standard and whether it’s being met.

For failed quality gates, the error conditions show us what needs fixing. SonarQube provides a single source of truth for all the code and languages in a project. It continually analyzes our code and advises us when corrective action is needed. Every supported language in SonarQube includes dozens of rules that offer clear resolution guidance, helping our developers learn clean code practices with every new code commit!
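The quality gate concept can be sketched as a set of threshold conditions evaluated against the metrics of a build, with each failed condition reported so developers know what to fix. The metric names and thresholds below are hypothetical and are not SonarQube's built-in gate definition or API; this is only a simplified model of the idea.

```python
from typing import Dict, List, Tuple

# Each condition maps a metric name to (comparison kind, threshold).
# Metric names and thresholds are hypothetical, not SonarQube's own.
GATE: Dict[str, Tuple[str, float]] = {
    "new_bugs": ("max", 0),
    "new_vulnerabilities": ("max", 0),
    "new_code_coverage_pct": ("min", 80.0),
    "new_duplicated_lines_pct": ("max", 3.0),
}


def evaluate_gate(metrics: Dict[str, float]) -> List[str]:
    """Return the failed conditions; an empty list means the gate passes."""
    failures = []
    for name, (kind, threshold) in GATE.items():
        value = metrics[name]
        if kind == "max" and value > threshold:
            failures.append(f"{name} = {value} exceeds maximum {threshold}")
        elif kind == "min" and value < threshold:
            failures.append(f"{name} = {value} is below minimum {threshold}")
    return failures


# Hypothetical metrics for the new code in one build.
build_metrics = {
    "new_bugs": 0,
    "new_vulnerabilities": 1,
    "new_code_coverage_pct": 72.5,
    "new_duplicated_lines_pct": 1.2,
}

failures = evaluate_gate(build_metrics)
print("PASSED" if not failures else "FAILED")
for line in failures:
    print(" -", line)
```

In this example the gate fails on two conditions (one new vulnerability and coverage below the minimum), which is exactly the kind of actionable signal a failed gate is meant to give.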

The SonarQube homepage highlights the code quality and code security of the new code changed or added that day so we can focus on what’s important: making sure the code our developers write today is solid. Obviously, developers own the quality of the new code they write today. Keeping new code clean translates into our development teams achieving more throughput and less disruption later.

Conclusion

There are other new tools and frameworks we are evaluating to see if they provide different coverages for any of our normal coding circumstances. Also, on occasion, one of our clients might direct us to another new coding analysis tool and in that case, we try to use that opportunity to learn to use this new tool and find out if it is useful to us beyond this client’s project.

We are convinced that no one tool will do the entire and best job for us, so we expect to have a growing set of tools that handle different scenarios and different types of coding and provide different warnings and outputs for our developers to work with. What is the ideal combination to run in any particular set of circumstances? The answer is of course changing rapidly with time as new and better tools are being released and new and better development methods and processes are coming into being. We do know that it will be more than a single tool for sure.

Yes, running more than one tool is more expensive and time consuming than running a single tool and leaving it at that. But our cost-benefit analysis to date strongly indicates that using several tools in combination is much more cost effective, leading to much better automation and performance of our coding process and to much higher quality of our delivered code. We expect to be using two to four tools in combination. The different tools find different types of errors. There are some overlaps, but by and large many errors are found by only one of the tools.

The bottom line for Macrosoft is we intend to continue our in-depth evaluations of all new code analysis tools as they come into the marketplace so we can make sure we can achieve our dual goals of automation and quality of our coding processes.

[1] We would be happy to refer you to any of our clients to hear firsthand what they think about all this.

[2] These development managers are stationed at one of our two international development centers in Trivandrum, India and Lahore, Pakistan.

[3] Keep in mind that we use an agile Scrum development process, so development work by individual coders is continually being submitted for review.

By G.N. Shah, Ronald Mueller | January 18th, 2022 | General
