Two of the main lines of business for Macrosoft are Enterprise Software Development and Legacy Migration. In Enterprise Software Development we build new enterprise systems based on requirements provided by our clients. In Legacy Migration we migrate a client’s older legacy system to a new state-of-the-art enterprise application. In both cases, we do most of the development work out of our two international development centers, where over 200 developers are engaged in these efforts. We routinely work with several tech stacks, including .NET, Java, and Python, as well as other open-source platforms.

One of the ‘hidden’ gems in all this development work is our software quality assurance (QA) teams, now more than 40 individuals and growing, divided between our two development centers. This paper highlights the work and competencies of these QA teams and details the different areas of software quality assurance in which they work.

Our Enterprise Development and Legacy Migration lines of business have grown significantly over the last several years, and one of the main reasons is the quality and workmanship of the new systems we have been building for our clients. In addition, these new systems are delivered to clients on time and within budget in nearly all cases. One of the key reasons we have been able to accomplish this is our exceptional teams of QA experts, with varied skills across all the relevant QA areas, as detailed below.

We are writing this paper to highlight these outstanding QA skills and to make our clients and potential clients aware of these capabilities. In essence our QA capabilities as a company stem from two major sources:

- The skills and depth of experience of the QA teams we have employed at our two international development centers.

- The state-of-the-art QA toolset that we use to conduct this work, and our continual evaluation of new QA automation tools in the marketplace that can make our QA teams more efficient and productive.

Finally, with this paper we want to make it known that we are ready and able to take on stand-alone QA work, whether in the form of fixed-price projects or as teams of QA individuals who integrate with the client’s development teams. Please contact us to discuss any such possibilities.

SQA Process

The objective of quality assurance is, of course, to minimize defects and thereby increase software system quality. Put another way, the goal is to complete projects on time, within budget, and with high quality. This is especially important in the final system released to the client, but it is also critical throughout the development process. Minimizing defects in each release during development leads directly to much higher programming efficiency and productivity.

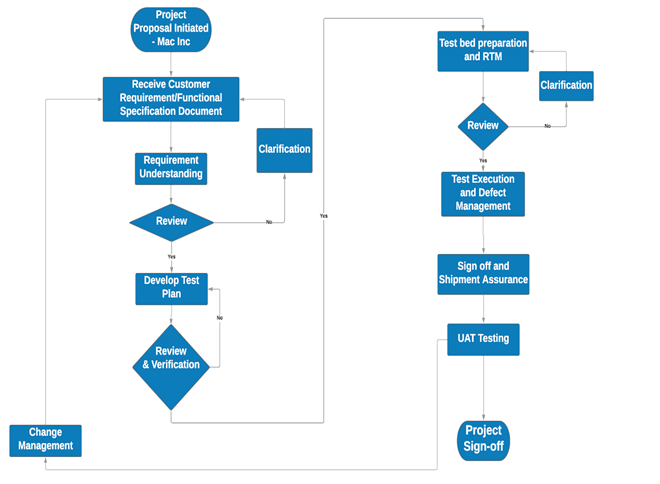

The high-level flow chart of the QA process we use for new system development shows the major points of QA interaction: test plan development; test bed preparation and RTM (requirements traceability matrix) preparation; test execution and defect management; and finally, user acceptance testing (UAT). These are the four points in the development and testing process where our QA teams hold the keys to a successful system development project.
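To make the RTM idea concrete, here is a minimal sketch of a requirements traceability matrix as a simple mapping from requirements to the test cases that cover them. The requirement IDs, test case IDs, and function names are hypothetical, chosen only for illustration; this is not a description of the tooling our teams use.

```python
from collections import defaultdict


def build_rtm(test_cases):
    """Map each requirement ID to the list of test case IDs that cover it.

    `test_cases` maps a test case ID to the requirement IDs it verifies.
    """
    rtm = defaultdict(list)
    for test_id, requirement_ids in test_cases.items():
        for req_id in requirement_ids:
            rtm[req_id].append(test_id)
    return dict(rtm)


def uncovered_requirements(requirements, rtm):
    """Return the requirements that no test case traces back to."""
    return [req for req in requirements if not rtm.get(req)]


if __name__ == "__main__":
    # Hypothetical project data for illustration only.
    requirements = ["REQ-1", "REQ-2", "REQ-3"]
    test_cases = {"TC-1": ["REQ-1"], "TC-2": ["REQ-1", "REQ-2"]}

    rtm = build_rtm(test_cases)
    print(rtm)
    print(uncovered_requirements(requirements, rtm))
```

A check like `uncovered_requirements` is the kind of question an RTM is meant to answer before test execution begins: which requirements still have no test tracing to them.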

QA Types and the Tools We Use

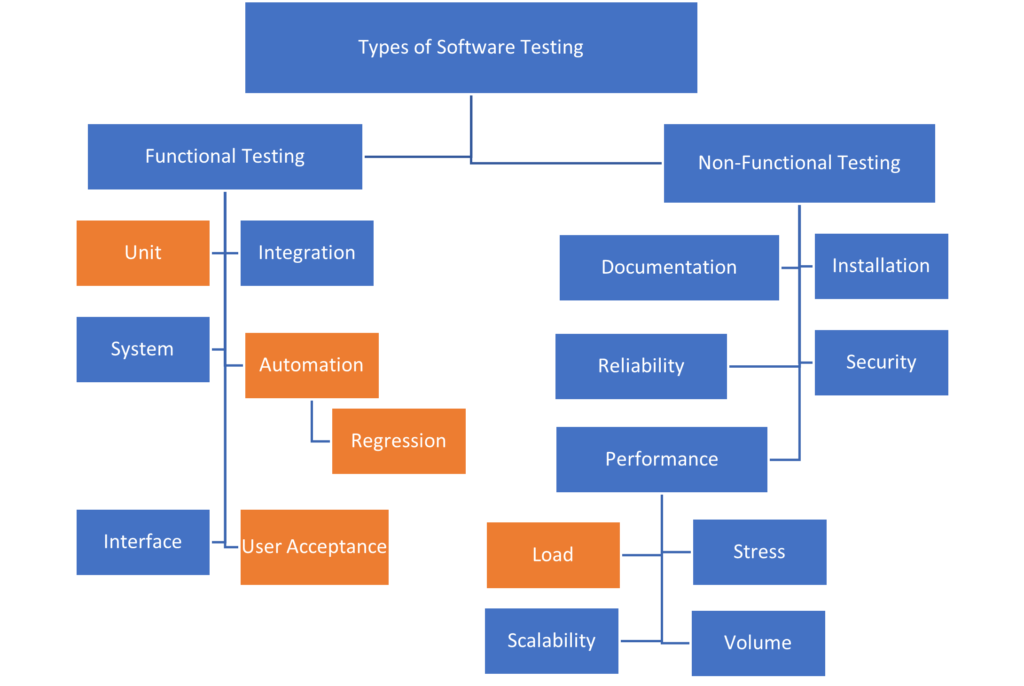

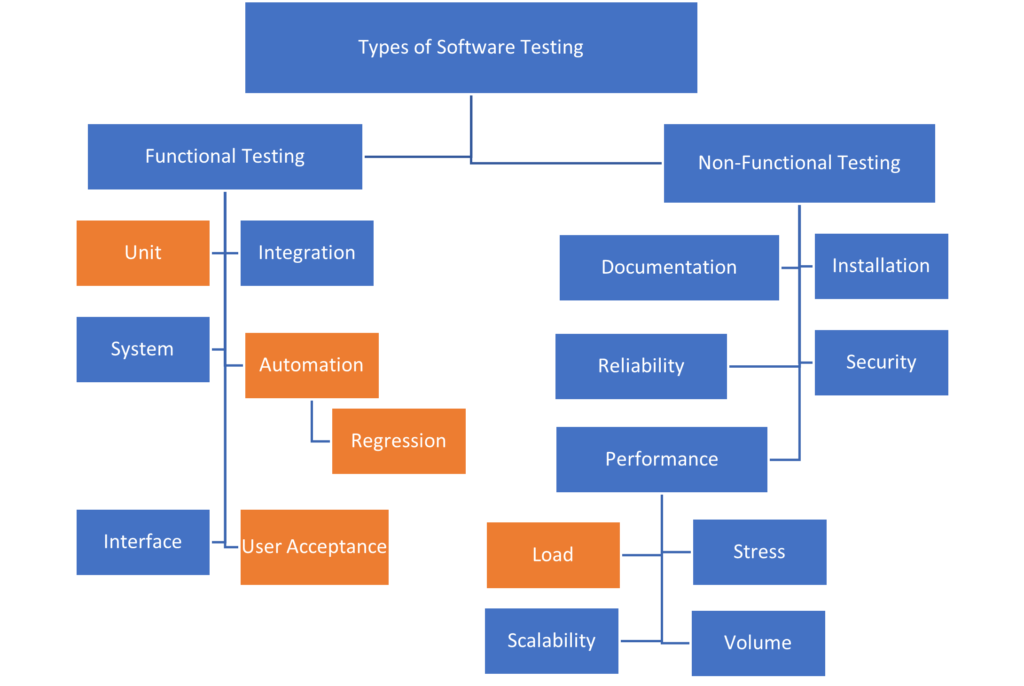

In this section we present some of the areas of QA work in which our teams operate. This is not meant to be a taxonomy of the hundreds of different flavors and strains of QA work that exist. Rather, we want to highlight some of the most important areas in which our QA teams work, and then highlight the many tools we use in conducting that work.

One key point we want to emphasize: we always strive to use best-in-class tools for all our QA work and, just as importantly, we are always evaluating the market for new and better tools as they come along. We want our QA toolset to always be the best available, enabling our QA teams to conduct their work with the highest levels of efficiency and automation. We believe that is a win-win for both us and our clients.

The table below shows the current set of 9 tools we use across our areas of QA work. This is our standard set of tools, but if a client needs us to adopt a different tool, we will adopt it for that client’s projects.

As noted above, we are always evaluating new tools that might provide better functionality. If we find such a tool, we will quickly switch to it to take advantage of any significant improvements in automation, efficiency, and productivity it can provide.

| QA Type | Toolset we use | Description |
| --- | --- | --- |
| Manual Testing | | Depending on the client and project, some of the work we do consists of manual testing. |
| Test Automation | Cypress.io | Cypress is a next-generation front-end testing tool built for the modern web. It addresses the key pain points developers and QA engineers face when testing modern applications and makes it possible to set up tests. |
| | Selenium | Selenium is an open-source tool used to automate tests across browsers for web applications. It helps ensure high-quality web applications, whether they are responsive, progressive, or regular. Testers use Selenium because it is easy to generate test scripts to validate functionality. We use Selenium WebDriver to create and execute our test scripts. |
| | Katalon Studio | Katalon Studio is built on top of the open-source automation frameworks Selenium and Appium, with a specialized IDE interface for web, API, mobile, and desktop application testing. |
| | TestComplete | TestComplete, developed by SmartBear Software, supports a wide range of technologies such as .NET, Delphi, C++Builder, Java, Visual Basic, HTML5, Flash, Flex, and Silverlight, across desktop, web, and mobile systems. It helps testers develop test cases in scripting languages such as JavaScript, Python, VBScript, and DelphiScript. It is available with two licenses and a free trial version valid for 30 days. |
| Continuous software testing in real time (regression testing). If you change any component, module, or function, you must check that the whole system still functions properly after those modifications. Testing the whole system after such modifications is known as regression testing. | Zephyr | Zephyr is a real-time test management platform for enterprises, designed to keep pace with continuous software delivery and with teams focused on performance and quality. It lets teams track quality, manage global teams, integrate with JIRA, and report in real time. |
| Load Testing. Load testing is a kind of performance testing that measures how much load a system can take before its performance begins to degrade. Running load tests tells us the load capacity of a system. | Apache JMeter | Apache JMeter is an Apache project that can be used as a load testing tool for analyzing and measuring the performance of a variety of services, with a focus on web applications. |
| | HP Micro Focus LoadRunner | LoadRunner is used to test applications, measuring system behavior and performance under load. It can simulate thousands of users concurrently using application software, recording and later analyzing the performance of key components of the application. |
| | BlazeMeter | BlazeMeter provides a performance testing tool that you configure to your needs, whether as a load test, a stress test, or something else. Tests can be scaled up to run across multiple engines and from multiple locations around the world. |
| | Eclipse | Eclipse is used as an IDE for creating test scripts for Selenium. The Eclipse Platform itself is tested during every build by an extensive suite of automated tests, written using the JUnit or TestNG test framework. |
| Unit Testing. Testing each component or module of a software project is known as unit testing. This kind of testing requires programming knowledge, so it is done by programmers rather than testers. A great deal of unit testing is needed, since each unit of code in the project should be tested. The cost of unit testing depends on the level of code coverage that needs to be met. | Visual Studio Test Manager | The Test Explorer window helps developers create, manage, and run unit tests. You can use the Microsoft unit test framework or one of several third-party and open-source frameworks. Visual Studio is also extensible and opens the door for third-party unit testing adapters such as NUnit and xUnit.net. |
| UAT Testing | | The client purchasing the software performs user acceptance testing to decide whether the software can be accepted, checking whether it meets all of the client’s requirements and preferences. If the software does not meet all requirements, or if the client does not like something in the application, they may request changes before accepting the project. We support alpha testing at the client developer’s site. |
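The load testing idea in the table, increasing load until performance begins to degrade, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how JMeter, LoadRunner, or BlazeMeter work internally: `handle_request` is a hypothetical stand-in for one call to the system under test, and the capacity of 4 concurrent requests is an invented number used to simulate a bottleneck.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import mean

# Assumption: the simulated system can serve only 4 requests at once.
_CAPACITY = threading.Semaphore(4)


def handle_request(_request_number):
    """Hypothetical stand-in for one request; returns its latency in seconds."""
    start = time.perf_counter()
    with _CAPACITY:        # requests beyond capacity wait here
        time.sleep(0.01)   # simulated processing time
    return time.perf_counter() - start


def run_load_step(concurrent_users, requests_per_user=5):
    """Send requests from `concurrent_users` simulated users and summarize latency."""
    total_requests = concurrent_users * requests_per_user
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        latencies = list(pool.map(handle_request, range(total_requests)))
    return {
        "users": concurrent_users,
        "requests": len(latencies),
        "avg_latency_s": mean(latencies),
        "max_latency_s": max(latencies),
    }


if __name__ == "__main__":
    # Ramp up the number of simulated users and watch latency grow.
    for users in (1, 5, 20):
        print(run_load_step(users))
```

In this sketch, average latency should rise once the number of simulated users exceeds the assumed capacity, which is the degradation point a real load test looks for. Dedicated tools add what the sketch lacks: realistic request scripting, distributed load generation, and reporting.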

New Tools Being Considered

This section briefly describes some new methods and tools we are now evaluating in our QA work, to provide further improvements in efficiency, productivity, and automation.

- Besides performing tests, measuring their effectiveness is also important, and test coverage is one indicator of how thoroughly the tests exercise the code. Istanbul is a good tool for measuring test coverage and can be used for JavaScript software projects.

- There can be undetected errors in an application even after it is launched, which of course annoys the client’s users. Real-time error-monitoring tools such as Sentry and New Relic automatically detect errors and can notify us directly, so users do not need to report bugs.

- Automated code grading tools such as SonarQube and Codebeat help improve code quality by showing potential issues in an application. After analyzing the code, they provide valuable information for code quality enhancements, and they can also help fix bugs in less time.

- Programs called linters check whether code meets the coding convention rules specified for a project. A linter can save a lot of time, as manually checking code written by several developers is generally very time-consuming.

Please note that our QA and development teams already use several packages for code standardization and quality control. The new tools presented here are being considered only to the extent that they can improve on the capability we already have.
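As an illustration of what a linter does, the sketch below checks a single, hypothetical coding convention: function names must be in snake_case. It uses Python's standard `ast` module to inspect the source without running it. Real linters apply many such rules; the rule, the regular expression, and the function name here are assumptions made for the example.

```python
import ast
import re

# Assumed convention: optional leading underscores, then lowercase snake_case.
SNAKE_CASE = re.compile(r"^_*[a-z][a-z0-9_]*$")


def find_naming_violations(source):
    """Return (line number, name) pairs for functions that break the convention."""
    tree = ast.parse(source)
    violations = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            if not SNAKE_CASE.match(node.name):
                violations.append((node.lineno, node.name))
    return sorted(violations)


if __name__ == "__main__":
    sample = (
        "def loadCustomer():\n"
        "    pass\n"
        "\n"
        "def save_customer():\n"
        "    pass\n"
    )
    for line_number, name in find_naming_violations(sample):
        print(f"line {line_number}: function '{name}' is not snake_case")
```

Because the check is automatic, it can run on every commit, which is where the time savings over manual review come from.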

Final Comments

This concludes our review of Macrosoft’s QA team capabilities. As noted earlier, we are now expanding our enterprise work to include stand-alone QA work for our clients. Contact us to find out more. We will be happy to discuss all the new things we are now considering to further advance our QA capabilities.

By G.N. Shah, Venkatraman Rajaram, Ronald Mueller | Published on January 26th, 2022 | Last updated on July 16th, 2024 | Enterprise Services
